Wire Formats for High-Volume Service Communication

Every microservice architecture faces a fundamental question: how should services encode data when communicating? The answer matters enormously at scale. When you’re …

Methodically crawling through caches, concurrency, and chaos...at a pace slower than drying paint

Financial markets generate petabytes of data daily: price ticks, order book updates, news feeds, earnings reports, social media sentiment, and macroeconomic indicators. Traditional …

Consider a financial analytics platform serving real-time portfolio metrics to thousands of clients. Traditional row-oriented storage loads entire transaction records into …

Consider a social media platform with 8 million users facing a content moderation bottleneck: hate speech reports take 4-6 hours to reach moderators, and at scale—120,000 reports …

In 2017, a fraud detection startup discovered their Python-based neural network inference was creating a hidden cost: 200 milliseconds of latency per transaction. At their …

Caching is a classical systems technique that reduces access latency by retaining temporary replicas of data closer to the point of consumption. A typical deployment comprises a …

The Nordic power market is one of the world’s most liquid and sophisticated electricity markets, trading over 500 TWh annually across Norway, Sweden, Finland, and Denmark. …

In high-frequency trading (HFT), microseconds determine profitability. When an arbitrage opportunity appears—say, a 0.01% price discrepancy between two exchanges—it vanishes within …

Part 6 of 6. Emerging AI Capabilities. The architectures described in this article represent the current state of the art, but fundamental limitations …

Part 5 of 6. Case Study: Feed Ranking Architecture. The following synthesizes public disclosures from major platforms …

Part 4 of 6. Ethical Considerations. Recommendation systems shape public discourse and individual well-being. Responsible …

Part 3 of 6. Evaluation and Metrics. Recommendation systems require rigorous evaluation across offline, online, and long-term …

Part 2 of 6. Ranking Models. The ranking stage scores candidates with a model trained to predict user engagement. Unlike …

Part 1 of 6. Introduction. Social media platforms generate value by connecting users with content they find engaging. The recommendation …

Modern social media platforms serve billions of users with sub-second latency requirements while handling massive write throughput and complex relationship graphs. This article …

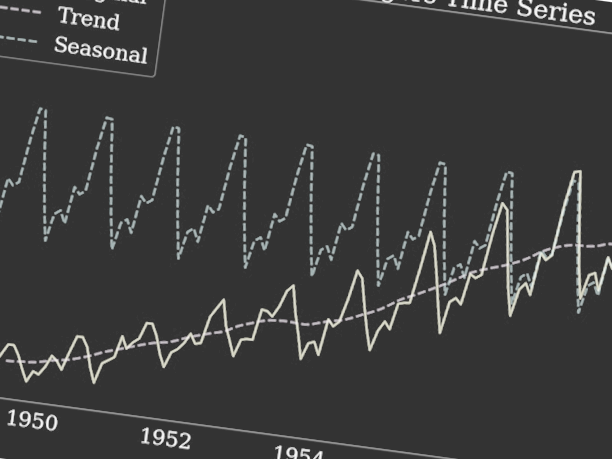

Time series databases (TSDBs) are specialized storage engines optimized for time-stamped data at massive scale. Unlike general-purpose databases, TSDBs exploit temporal locality, …

Introduction. As modern systems evolve toward distributed, highly parallel architectures, traditional concurrency techniques (locks, mutexes, and shared memory) quickly reveal their …

In 2019, a quantitative trading firm discovered their portfolio risk calculation was bottlenecked not by algorithmic complexity, but by memory access patterns. By restructuring …

Financial institutions require precise, auditable, and performant valuation of derivative portfolios. This article presents the design and implementation of a domain-specific …